Introduction

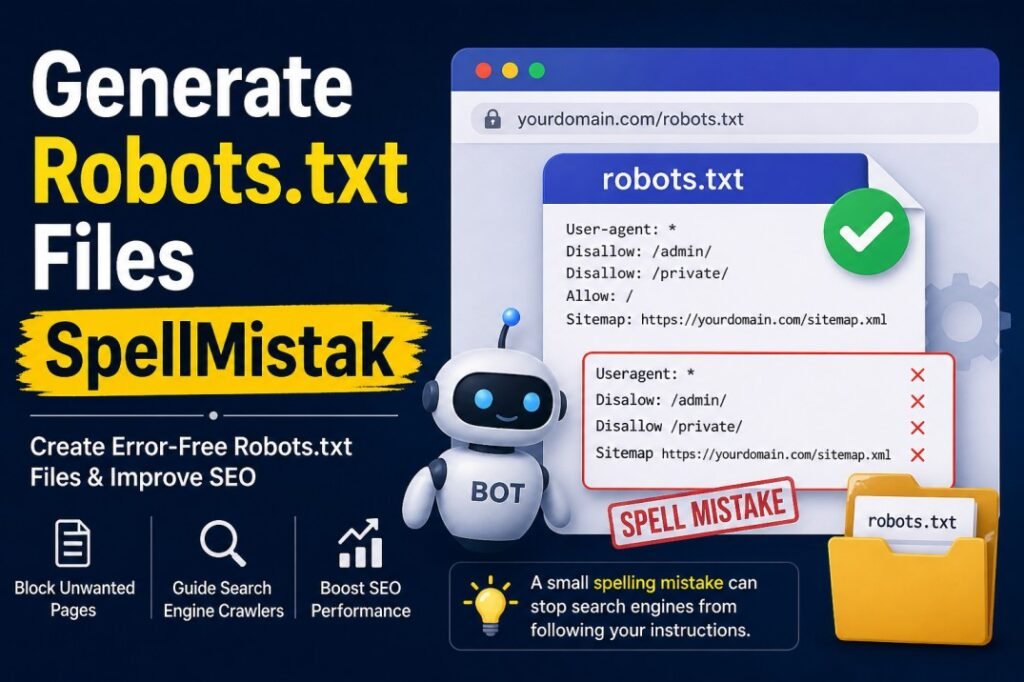

The phrase “generate robots.txt files spellmistake” might sound like a minor technical concern, but in reality, spelling mistakes in a robots.txt file can quietly damage your website’s visibility. Many site owners focus on creating a robots.txt file but overlook how small spelling errors can completely change how search engines interpret their instructions. A single typo can turn a well-structured file into something useless or, worse, harmful.

Understanding how to generate robots.txt files without spelling mistakes is essential if you want search engines to crawl your site correctly. This article explores the issue in depth, explains common mistakes, and offers practical guidance to ensure your robots.txt file works exactly as intended.

What a Robots.txt File Really Does

A robots.txt file is one of the first things search engine crawlers check when they visit your website. It acts like a set of instructions, telling bots which pages they can access and which ones they should avoid.

When you generate a robots.txt file free of spelling mistakes, you’re essentially creating a clear communication channel between your website and search engines. If done correctly, it helps prioritize important content and keeps crawlers away from areas that do not need to be crawled. If done incorrectly, it can block valuable pages or open up sections you meant to keep crawlers out of.

Why Robots.txt Spelling Mistakes Matter More Than You Think

It’s easy to assume that minor spelling errors won’t have much impact. After all, humans can understand typos. Search engines, however, are not forgiving in the same way.

When you generate a robots.txt file, mistakes like writing “Disalow” instead of “Disallow” or “Useragent” instead of “User-agent” can cause a crawler that parses the file strictly to ignore the entire rule. This means your instructions won’t work at all.

In real-world scenarios, this can lead to:

Unintended crawling of private directories

Important pages being skipped

Duplicate content problems

Reduced search visibility

These issues often go unnoticed for weeks or even months, making the problem even more damaging.
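To make the effect of a misspelled directive concrete, here is a minimal sketch using `urllib.robotparser` from Python’s standard library. The helper name `can_crawl` and the `example.com` URL are illustrative assumptions, and this parser is only one implementation; individual search engines may handle unknown directives differently.

```python
from urllib.robotparser import RobotFileParser


def can_crawl(robots_txt: str, url: str, agent: str = "*") -> bool:
    """Return True if `agent` may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


# Two files that differ by a single letter in the directive name.
correct = "User-agent: *\nDisallow: /private/\n"
typo = "User-agent: *\nDisalow: /private/\n"

url = "https://example.com/private/report.html"  # hypothetical URL

print(can_crawl(correct, url))  # False: the rule is understood and applied
print(can_crawl(typo, url))     # True: the misspelled line is silently skipped
```

Note that the parser raises no error for the misspelled line. It simply behaves as if the rule were not there, which is why this kind of mistake is easy to miss.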

Common Mistakes When Creating Robots.txt Files

One of the biggest challenges in generating a robots.txt file without spelling mistakes is attention to detail. Even experienced developers sometimes overlook small syntax errors.

A common mistake is incorrect directive spelling. Words like “Disallow” and “Allow” must be written exactly as expected. Another issue is missing punctuation, such as forgetting the colon after “User-agent”.

Spacing and stray characters matter as well. Most parsers tolerate whitespace around the colon, but an extra space or misplaced character inside a path changes what the rule matches. Incorrect folder paths can also make your rules ineffective, even if the spelling is perfect.

These mistakes might seem small, but they can completely change how your file behaves.
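The mistakes described above (misspelled directives, a missing colon, and malformed paths) can be caught by a small script. The following is a rough sketch rather than a complete validator: the function name `lint_robots_txt`, the list of known directives, and the rule that paths should start with `/` or `*` are simplifying assumptions made for illustration.

```python
import difflib

# Directives this sketch recognizes; an assumption, not an exhaustive list.
KNOWN_DIRECTIVES = ["user-agent", "disallow", "allow", "sitemap", "crawl-delay"]


def lint_robots_txt(text: str) -> list[str]:
    """Return a list of human-readable problems found in robots.txt text."""
    problems = []
    for number, raw in enumerate(text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and outer spaces
        if not line:
            continue
        if ":" not in line:
            problems.append(f"line {number}: missing colon in {line!r}")
            continue
        name, value = (part.strip() for part in line.split(":", 1))
        key = name.lower()
        if key not in KNOWN_DIRECTIVES:
            # Suggest the closest known directive, if any is similar enough.
            guess = difflib.get_close_matches(key, KNOWN_DIRECTIVES, n=1)
            hint = f" (did you mean {guess[0]!r}?)" if guess else ""
            problems.append(f"line {number}: unknown directive {name!r}{hint}")
        elif key in ("allow", "disallow") and value and not value.startswith(("/", "*")):
            problems.append(f"line {number}: path {value!r} should start with '/'")
    return problems


sample = "Useragent: *\nDisalow: /private/\nDisallow admin/\nDisallow: tmp/\n"
for problem in lint_robots_txt(sample):
    print(problem)
```

Run against the sample, the script reports one problem per line: two misspelled directives with suggested corrections, one missing colon, and one path without a leading slash.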

The Real Impact of a Small Typo

Imagine spending months building content and optimizing your website, only to discover that a simple typo prevented search engines from crawling your most important pages.

When your robots.txt file contains spelling errors, the consequences are often silent. There’s no immediate warning, and everything may appear normal on the surface. However, behind the scenes, search engines may not be following your instructions at all.

This can lead to indexing problems, where pages you want to rank are ignored, while less important pages get attention. Over time, this imbalance affects your site’s overall performance.

How to Avoid Robots.txt Spell Mistakes

The best way to avoid spelling mistakes when you generate a robots.txt file is to keep things simple and double-check everything.

Start by writing your file using standard directives only. Avoid unnecessary complexity. The more complicated your file becomes, the higher the chance of making a mistake.

After creating the file, review it carefully. Look for spelling errors, formatting issues, and incorrect paths. It’s also helpful to test your file using reliable tools before uploading it to your website.

Consistency is key. Once you establish a correct structure, reuse it as a template for future updates.
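One way to reuse a known-good structure is to render the file from data, so the directive names are written once in code and never retyped by hand. The sketch below assumes a hypothetical `Group` class and `render_robots_txt` function; neither comes from an existing library, and the paths and sitemap URL are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class Group:
    """One block of rules for a single user agent (hypothetical helper)."""
    user_agent: str
    disallow: list[str] = field(default_factory=list)
    allow: list[str] = field(default_factory=list)


def _checked(path: str) -> str:
    # Reject paths that would not match anything useful in this sketch.
    if not path.startswith("/"):
        raise ValueError(f"path must start with '/': {path!r}")
    return path


def render_robots_txt(groups: list[Group], sitemaps: tuple[str, ...] = ()) -> str:
    """Render robots.txt text; directive names are spelled only here."""
    lines = []
    for group in groups:
        lines.append(f"User-agent: {group.user_agent}")
        for path in group.allow:
            lines.append(f"Allow: {_checked(path)}")
        for path in group.disallow:
            lines.append(f"Disallow: {_checked(path)}")
        lines.append("")  # blank line between groups
    for url in sitemaps:
        lines.append(f"Sitemap: {url}")
    return "\n".join(lines).rstrip("\n") + "\n"


print(render_robots_txt(
    [Group("*", disallow=["/admin/", "/tmp/"])],
    sitemaps=("https://example.com/sitemap.xml",),
))
```

Because the directive names live in one place, a future update only changes the data passed in, not the spelling of the rules themselves.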

Testing Your Robots.txt File

Testing is an essential step that many people skip. Even if you believe your file is correct, it’s always worth verifying.

When you generate a robots.txt file and test it properly, you can ensure that search engines interpret your instructions as intended. Testing tools allow you to simulate how crawlers read your file and highlight any errors.

This process not only catches spelling mistakes but also helps identify logical issues, such as blocking pages unintentionally.
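As a rough illustration of this kind of test, the sketch below checks a file against a list of URLs and the outcome you expect for each. It uses Python’s standard `urllib.robotparser`; the function name `check_expectations` and the `example.com` URLs are assumptions for the example. Keep in mind that this parser applies the first matching rule, whereas some search engines pick the most specific match, so results can differ for files that mix Allow and Disallow.

```python
from urllib.robotparser import RobotFileParser


def check_expectations(robots_txt: str, expectations: dict[str, bool],
                       agent: str = "*") -> list[str]:
    """Return the URLs whose crawl permission differs from what was expected."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    failures = []
    for url, should_allow in expectations.items():
        if parser.can_fetch(agent, url) != should_allow:
            failures.append(url)
    return failures


robots_txt = "User-agent: *\nDisallow: /admin/\nDisallow: /blog\n"

# True means the URL should be crawlable, False means it should be blocked.
expectations = {
    "https://example.com/": True,
    "https://example.com/admin/login": False,
    "https://example.com/blog/post-1": True,
}

print(check_expectations(robots_txt, expectations))
# ['https://example.com/blog/post-1']
```

Here every directive is spelled correctly, yet the test still fails: the `/blog` rule blocks the blog posts that were supposed to stay crawlable. This is the kind of logical issue a spelling check alone would not reveal.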

Balancing Control and Accessibility

A well-written robots.txt file is not about blocking everything. It’s about guiding search engines toward your most valuable content while keeping unnecessary or sensitive areas out of reach.

When your robots.txt file is free of spelling mistakes, you maintain that balance. You allow search engines to focus on what matters most without creating confusion.

Over-restricting access can be just as harmful as making mistakes. That’s why clarity and precision are so important.
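Over-restriction can come down to a single character. The following sketch, again using Python’s standard `urllib.robotparser` with a hypothetical helper named `blocks_homepage` and a placeholder URL, compares a file that blocks the whole site with one that blocks nothing.

```python
from urllib.robotparser import RobotFileParser


def blocks_homepage(robots_txt: str, agent: str = "*") -> bool:
    """Return True if the file prevents `agent` from fetching the site root."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(agent, "https://example.com/")


block_everything = "User-agent: *\nDisallow: /\n"  # a lone slash matches every path
block_nothing = "User-agent: *\nDisallow:\n"       # an empty value blocks nothing

print(blocks_homepage(block_everything))  # True
print(blocks_homepage(block_nothing))     # False
```

A check like this is worth running before every upload, since a stray `/` after `Disallow:` is valid syntax and will not be flagged as a spelling error.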

Human Oversight Still Matters

Automation tools can help generate robots.txt files, but they are not perfect. Relying entirely on automated solutions can sometimes introduce errors, especially if you don’t review the output.

Human oversight is crucial. When you personally check your file after using a generator, you’re more likely to catch issues that tools might miss.

This combination of automation and manual review is the safest way to avoid spelling mistakes in generated robots.txt files.

FAQ

What does “generate robots.txt files spellmistake” mean?

It refers to errors made while creating a robots.txt file, especially spelling or syntax mistakes that prevent search engines from understanding the instructions.

Can a spelling mistake really affect my website?

Yes, even a small typo can cause search engines to ignore your rules completely, leading to crawling and indexing issues.

How can I check for mistakes in my robots.txt file?

You can review the file manually and use testing tools to ensure everything is working correctly.

Should I block all pages in robots.txt?

No, the goal is to guide search engines, not block everything. Only restrict access to pages that don’t need to be crawled.

Is it better to create robots.txt manually or use a generator?

Both methods work, but even if you use a generator, you should always review the file to avoid mistakes.

Conclusion

The topic of spelling mistakes in generated robots.txt files may seem technical, but its impact is very real. A small typo can quietly disrupt your website’s performance, affecting how search engines crawl and index your content.

By paying close attention to spelling, formatting, and testing, you can ensure your robots.txt file works exactly as intended. It’s a small effort that delivers long-term benefits.

In the end, precision matters. When your instructions are clear and error-free, search engines can do their job effectively, and your website has a much better chance of reaching its full potential.